Author: Zhenchi Zhong (Software Engineer Intern at PingCAP)

Transcreator: Charlotte Liu; Editor: Tom Dewan

TiKV is a distributed key-value storage engine, which is based on the designs of Google Spanner, F1, and HBase. However, TiKV is much simpler to manage because it does not depend on a distributed file system.

As introduced in A Deep Dive into TiKV and How TiKV Reads and Writes, TiKV applies a 2-phase commit (2PC) algorithm inspired by Google Percolator to support distributed transactions. These two phases are Prewrite and Commit.

In this article, I’ll explore the execution workflow of a TiKV request in the prewrite phase, and give a top-down description of how the prewrite request of an optimistic transaction is executed within the multiple modules of the Region leader. This information will help you clarify the resource usage of TiKV requests and learn about the related source code in TiKV.

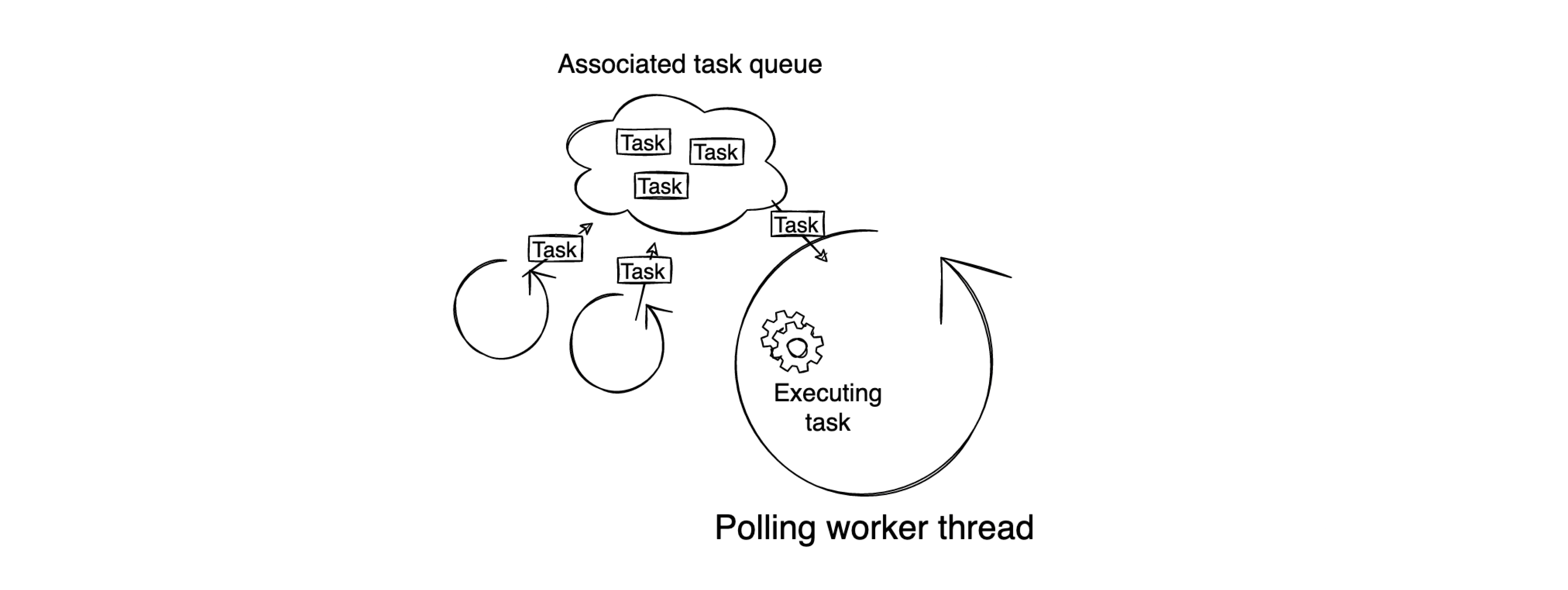

Work model

When TiKV is initialized, it creates different types of worker threads based on its configuration. Once these worker threads are created, they continuously fetch and execute tasks in a loop. These worker threads are generally paired with associated task queues. Therefore, by submitting various tasks to different task queues, you can execute some processes asynchronously. The following diagram provides a simple illustration of this work model:

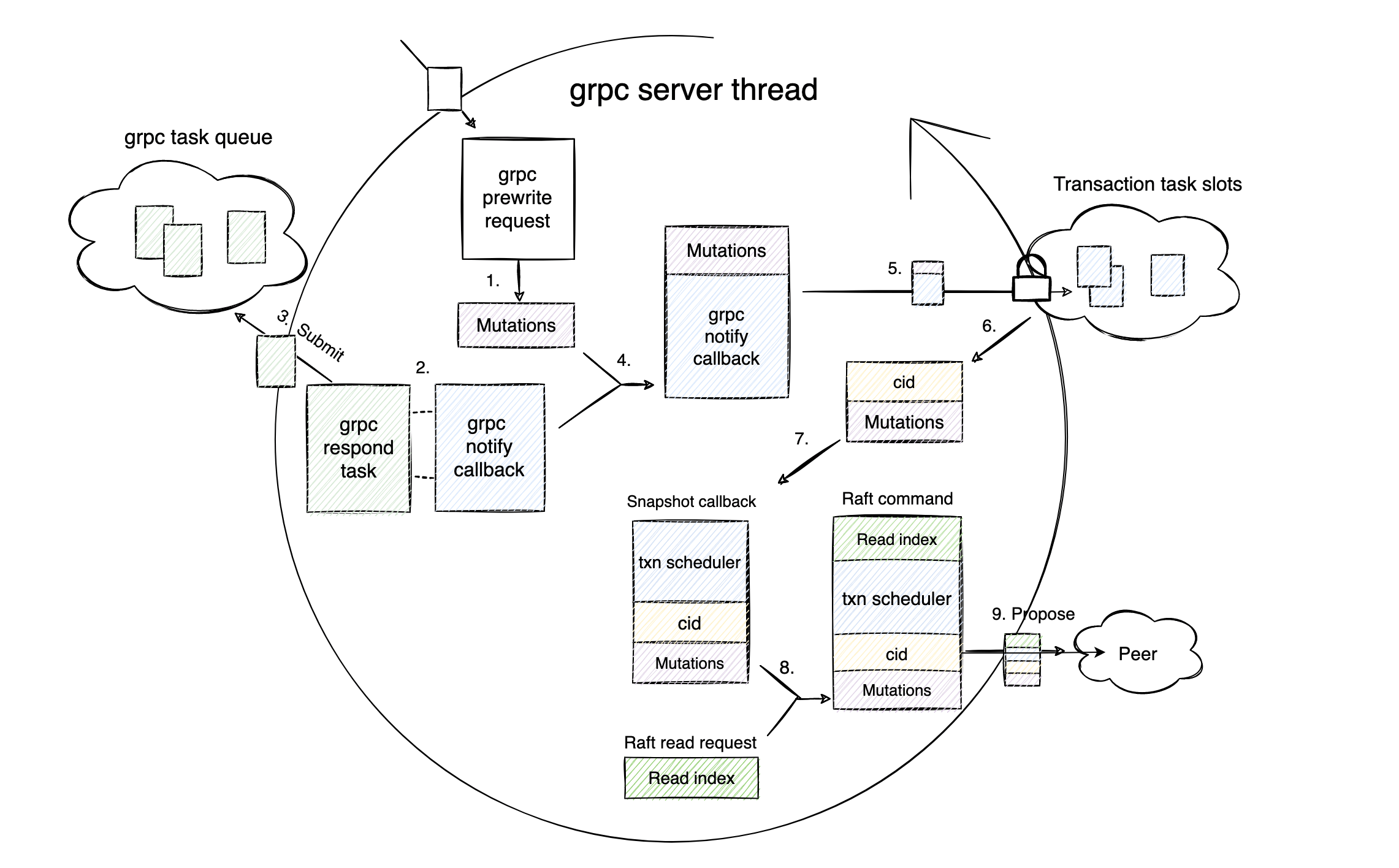
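To make the work model concrete, here is a minimal sketch of one worker thread paired with one task queue, using only the Rust standard library. The names `Task`, `spawn_worker`, and `run_tasks` are made up for this illustration and are not TiKV's own types; TiKV's real thread pools are considerably more elaborate.

```rust
use std::sync::mpsc;
use std::thread;

// A task is any closure the worker can run once.
type Task = Box<dyn FnOnce() + Send + 'static>;

// Spawns one worker thread paired with its own task queue.
// Returns the queue's sender and a handle that yields the executed-task count.
fn spawn_worker() -> (mpsc::Sender<Task>, thread::JoinHandle<usize>) {
    let (tx, rx) = mpsc::channel::<Task>();
    let handle = thread::spawn(move || {
        let mut executed = 0;
        // Fetch and execute tasks in a loop until every sender is dropped.
        for task in rx {
            task();
            executed += 1;
        }
        executed
    });
    (tx, handle)
}

// Submits `n` tasks to the queue and returns how many the worker executed.
fn run_tasks(n: usize) -> usize {
    let (tx, handle) = spawn_worker();
    for i in 0..n {
        tx.send(Box::new(move || println!("task {} done", i))).unwrap();
    }
    drop(tx); // closing the queue lets the worker loop end
    handle.join().unwrap()
}

fn main() {
    println!("executed {} tasks", run_tasks(3));
}
```

The submitting thread returns as soon as `send` completes, which is what makes the processing asynchronous from the caller's point of view.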

The gRPC Request phase

A TiKV prewrite request begins with a gRPC prewrite request from the network appearing on the gRPC server thread. The following figure shows the workflow in this phase.

It may not be easy to understand just by looking at the figure, so below I will describe exactly what the gRPC thread does step by step. The number of each step links to the corresponding source code:

- Step 1: Transforms the Prewrite protobuf message into Mutations that can be understood in the transaction layer. Mutation represents the write operation of a key.

- Step 2: Creates a channel and uses the channel sender to construct a gRPC notify callback.

- Step 3: Constructs the channel receiver as a gRPC respond task and submits it to the gRPC task queue to wait for notification.

- Step 4: Combines the gRPC notify callback and Mutations as a transaction task.

- Step 5: Acquires latches in the transaction layer and stores the gRPC notify callback in the transaction task slots.

- Step 6: Once the transaction layer successfully obtains the latches, the gRPC server thread continues executing the task with a unique `cid` that can be used to index the gRPC notify callback. The `cid` is globally unique throughout the execution process of the prewrite request.

- Step 7: Constructs the snapshot callback for the Raft layer. This callback consists of the `cid`, Mutations, and the transaction scheduler.

- Step 8: Creates a Raft Read Index request and combines the request with the snapshot callback to form a Raft command.

- Step 9: Sends the Raft command to the peer it belongs to.
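Steps 2 through 4 above can be sketched roughly as follows. This is only an illustration under my own assumptions: `Mutation`, `NotifyCallback`, `TxnTask`, `make_txn_task`, and `prewrite` are hypothetical names, a standard-library channel stands in for the gRPC machinery, and a spawned thread stands in for all the later phases.

```rust
use std::sync::mpsc;
use std::thread;

// Hypothetical stand-in for the transaction layer's write operation on a key.
#[allow(dead_code)]
#[derive(Debug, Clone, PartialEq)]
enum Mutation {
    Put { key: Vec<u8>, value: Vec<u8> },
    Delete { key: Vec<u8> },
}

// The notify callback owns the channel sender.
type NotifyCallback = Box<dyn FnOnce(Result<(), String>) + Send>;

// A transaction task bundles the mutations with the callback (step 4).
struct TxnTask {
    mutations: Vec<Mutation>,
    notify: NotifyCallback,
}

// Steps 2-4: create the channel, wrap the sender in a callback, and build the
// task. The returned receiver plays the role of the "respond task".
fn make_txn_task(mutations: Vec<Mutation>) -> (TxnTask, mpsc::Receiver<Result<(), String>>) {
    let (tx, rx) = mpsc::channel();
    let notify: NotifyCallback = Box::new(move |res| {
        // Ignore the error if the respond task has already gone away.
        let _ = tx.send(res);
    });
    (TxnTask { mutations, notify }, rx)
}

// Simulates the later phases on another thread, then waits for the
// notification the way the respond task would.
fn prewrite(mutations: Vec<Mutation>) -> Result<(), String> {
    let (task, respond_rx) = make_txn_task(mutations);
    let worker = thread::spawn(move || {
        let result = if task.mutations.is_empty() {
            Err("no mutations".to_string())
        } else {
            Ok(())
        };
        (task.notify)(result);
    });
    let response = respond_rx.recv().expect("callback dropped without notifying");
    worker.join().unwrap();
    response
}

fn main() {
    let m = Mutation::Put { key: b"k1".to_vec(), value: b"v1".to_vec() };
    println!("{:?}", prewrite(vec![m]));
}
```

Because the callback is a `FnOnce`, it can be invoked at most once, which matches the idea that each request is answered exactly one time.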

The gRPC thread has completed its mission. The rest of the story will continue in the raftstore thread.

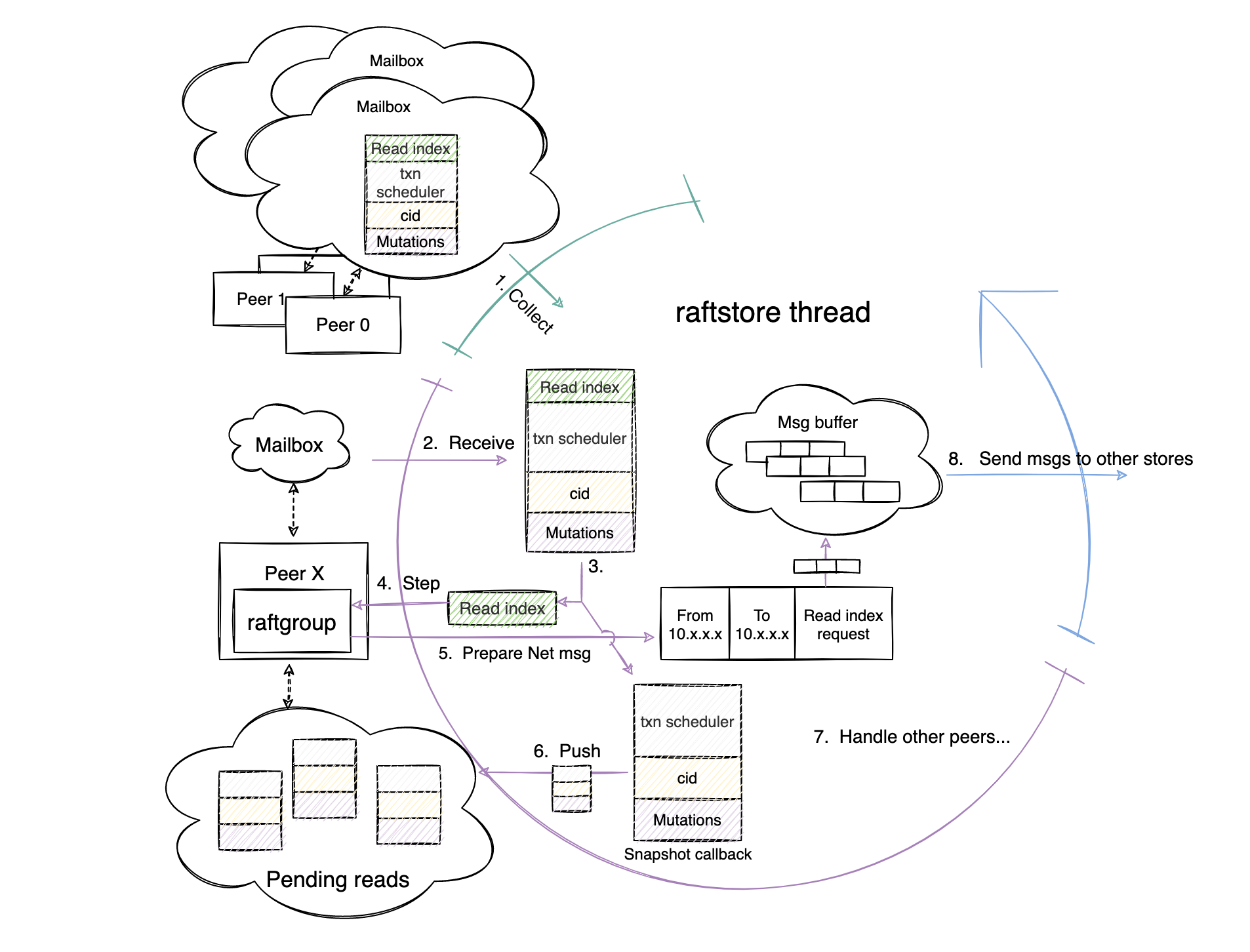

The Read Propose phase

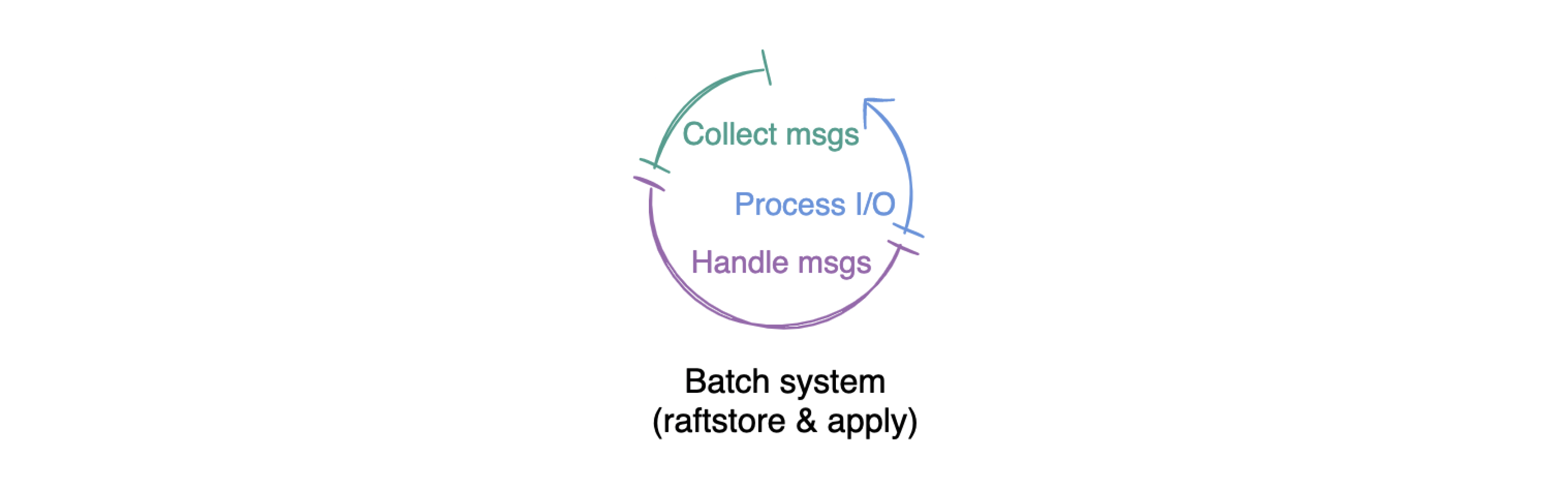

Before I introduce this phase, I’d like to talk about the batch system. It is the cornerstone of TiKV’s multi-raft implementation.

In TiKV, the raftstore thread and the apply thread are instances of the batch system. These two worker threads also operate in a fixed loop pattern, which is in line with the work model mentioned above.

The raftstore thread and the apply thread go through three phases in a loop: the collect messages phase, the handle messages phase, and the process I/O phase. The following sections describe these phases in detail.

Now, let’s come back to the story.

When the Raft command is sent to the peer it belongs to, the command is stored in the peer’s mailbox. In the collect messages phase (the green part of the circle above), the raftstore thread collects several peers with messages in their mailboxes and processes them together in the handle messages phase (the purple part of the circle).

In this prewrite request example, the Raft command with the Raft Read Index request is stored in the peer’s mailbox. After a raftstore thread collects the peer at step 1, the raftstore thread enters the handle messages phase.

The raftstore thread performs the following steps in the handle messages phase:

- Step 2: Reads the Raft command out of the peer’s mailbox.

- Step 3: Splits the Raft command into a Raft Read Index request and a snapshot callback.

- Step 4: Hands over the Raft Read Index request to the raft-rs library to be processed by the `step` function.

- Step 5: The raft-rs library prepares the network messages to be sent and saves them in the message buffer.

- Step 6: Stores the snapshot callback in the peer’s pending reads queue.

- Step 7: Returns to the beginning of this phase and processes other peers with the same workflow.

After the raftstore thread processes all the peers’ messages, it comes to the last phase of a single loop: the process I/O phase (the blue part of the circle). In this phase, the raftstore thread sends network messages stored in the message buffer to the other TiKV nodes of the cluster via the network interface at step 8.
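The three phases of one loop iteration can be sketched as below. This is a simplified model, not the batch system's real code: `PeerMsg`, `Peer`, and `poll_once` are names I made up, network messages are plain strings, and the process I/O phase just returns the buffer instead of touching a network.

```rust
use std::collections::{HashMap, VecDeque};

// Hypothetical message type; the real raftstore messages are far richer.
#[derive(Debug, PartialEq)]
enum PeerMsg {
    ReadIndex { cid: u64 },
}

#[derive(Default)]
struct Peer {
    mailbox: VecDeque<PeerMsg>,
    // cids whose snapshot callbacks are waiting for followers to respond.
    pending_reads: VecDeque<u64>,
}

// One iteration of the loop: collect -> handle -> process I/O.
// Returns the network messages "sent" in the process I/O phase.
fn poll_once(peers: &mut HashMap<u64, Peer>) -> Vec<String> {
    // Collect messages phase: pick the peers whose mailboxes are not empty.
    let mut ready: Vec<u64> = peers
        .iter()
        .filter(|(_, p)| !p.mailbox.is_empty())
        .map(|(id, _)| *id)
        .collect();
    ready.sort(); // deterministic order for the example

    // Handle messages phase: drain each mailbox and fill the message buffer.
    let mut message_buffer = Vec::new();
    for id in ready {
        let peer = peers.get_mut(&id).unwrap();
        while let Some(msg) = peer.mailbox.pop_front() {
            match msg {
                PeerMsg::ReadIndex { cid } => {
                    message_buffer.push(format!("peer {}: read index for cid {}", id, cid));
                    peer.pending_reads.push_back(cid);
                }
            }
        }
    }

    // Process I/O phase: flush the buffer (returned here instead of sent).
    message_buffer
}

fn main() {
    let mut peers: HashMap<u64, Peer> = HashMap::new();
    peers.entry(1).or_default().mailbox.push_back(PeerMsg::ReadIndex { cid: 42 });
    peers.entry(2).or_default(); // idle peer, skipped by the collect phase
    for line in poll_once(&mut peers) {
        println!("{}", line);
    }
}
```

Deferring all I/O to the end of the iteration is the point of the design: many peers share one flush instead of each paying for its own.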

This concludes the Read Propose phase. Before the prewrite request can make progress, it must wait for other TiKV nodes to respond.

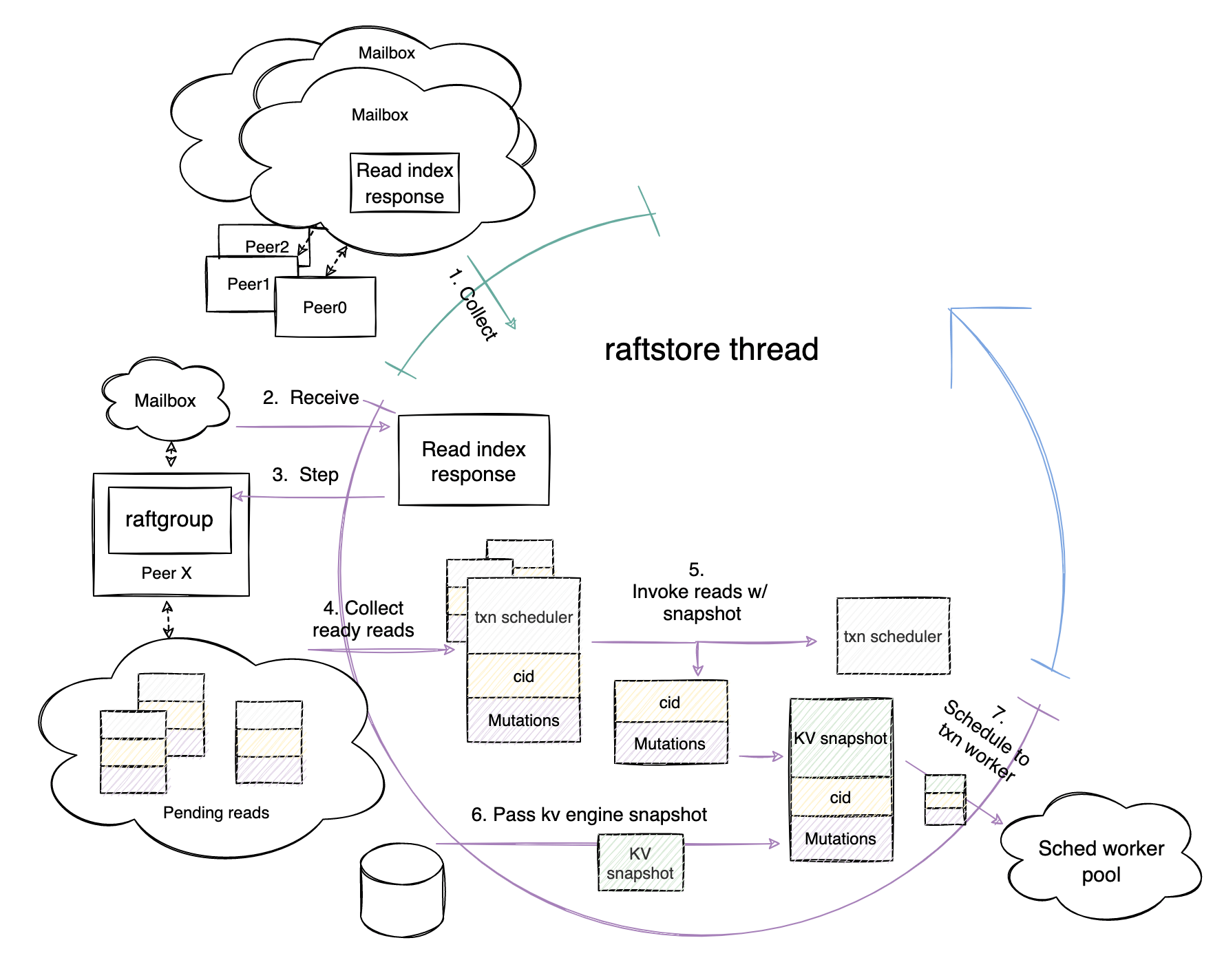

The Read Apply phase

After a “long” wait (a few milliseconds is actually a long time for a computer), the TiKV node that sent the network messages finally receives responses from the follower nodes and saves the reply messages in the peer’s mailbox. Now the prewrite request enters the Read Apply phase. The following figure shows the workflow in this phase:

The hardworking raftstore thread notices that there is a message waiting to be processed in this peer’s mailbox, so the thread’s behavior in this phase is as follows:

- Step 1: Collects the peer again when it loops back to the collect messages phase.

- Step 2: As in the previous phase, the thread reads the reply message out of the peer’s mailbox.

- Step 3: Passes the message to raft-rs to be processed by the `step` function.

- Step 4: Identifies which read operations can be applied after the message is processed and collects the operations.

- Step 5: Invokes the snapshot callbacks which are temporarily stored in the peer’s pending reads queue.

- Step 6: Constructs and sends the snapshot of the KV engine to the snapshot callbacks.

- Step 7: Splits the snapshot callbacks into transaction schedulers and tasks consisting of snapshot, `cid`, and Mutations. Then, the raftstore thread sends the tasks to the transaction worker threads according to the information recorded by the transaction scheduler.
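As a rough sketch of steps 4 through 6, the code below pops the pending reads that are now safe to serve and hands each callback a snapshot. Everything here is an assumption made for illustration: `Snapshot`, `PendingRead`, `apply_reads`, and `demo_queue` are invented names, the "KV engine" is an in-memory map, and I assume reads are confirmed in queue order up to some id.

```rust
use std::collections::{BTreeMap, VecDeque};
use std::sync::mpsc;
use std::sync::Arc;

// Hypothetical snapshot: an immutable, shareable view of the KV engine.
type Snapshot = Arc<BTreeMap<Vec<u8>, Vec<u8>>>;
type SnapshotCallback = Box<dyn FnOnce(Snapshot)>;

struct PendingRead {
    read_id: u64,
    callback: SnapshotCallback,
}

// Pops every pending read with an id up to `confirmed_up_to` and invokes its
// callback with a snapshot. Returns how many callbacks ran.
fn apply_reads(
    pending: &mut VecDeque<PendingRead>,
    confirmed_up_to: u64,
    engine: &BTreeMap<Vec<u8>, Vec<u8>>,
) -> usize {
    let snapshot: Snapshot = Arc::new(engine.clone());
    let mut invoked = 0;
    while pending.front().map_or(false, |r| r.read_id <= confirmed_up_to) {
        let read = pending.pop_front().unwrap();
        (read.callback)(Arc::clone(&snapshot));
        invoked += 1;
    }
    invoked
}

// Builds three pending reads whose callbacks report (read_id, snapshot size).
fn demo_queue(tx: &mpsc::Sender<(u64, usize)>) -> VecDeque<PendingRead> {
    (1..=3u64)
        .map(|read_id| {
            let tx = tx.clone();
            let callback: SnapshotCallback =
                Box::new(move |snap: Snapshot| tx.send((read_id, snap.len())).unwrap());
            PendingRead { read_id, callback }
        })
        .collect()
}

fn main() {
    let mut engine = BTreeMap::new();
    engine.insert(b"k1".to_vec(), b"v1".to_vec());
    let (tx, rx) = mpsc::channel();
    let mut pending = demo_queue(&tx);
    let invoked = apply_reads(&mut pending, 2, &engine);
    println!("invoked {}, still pending {}", invoked, pending.len());
    for (read_id, keys) in rx.try_iter() {
        println!("read {} saw a snapshot with {} key(s)", read_id, keys);
    }
}
```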

This concludes the Read Apply phase. Next, it’s the transaction worker’s turn.

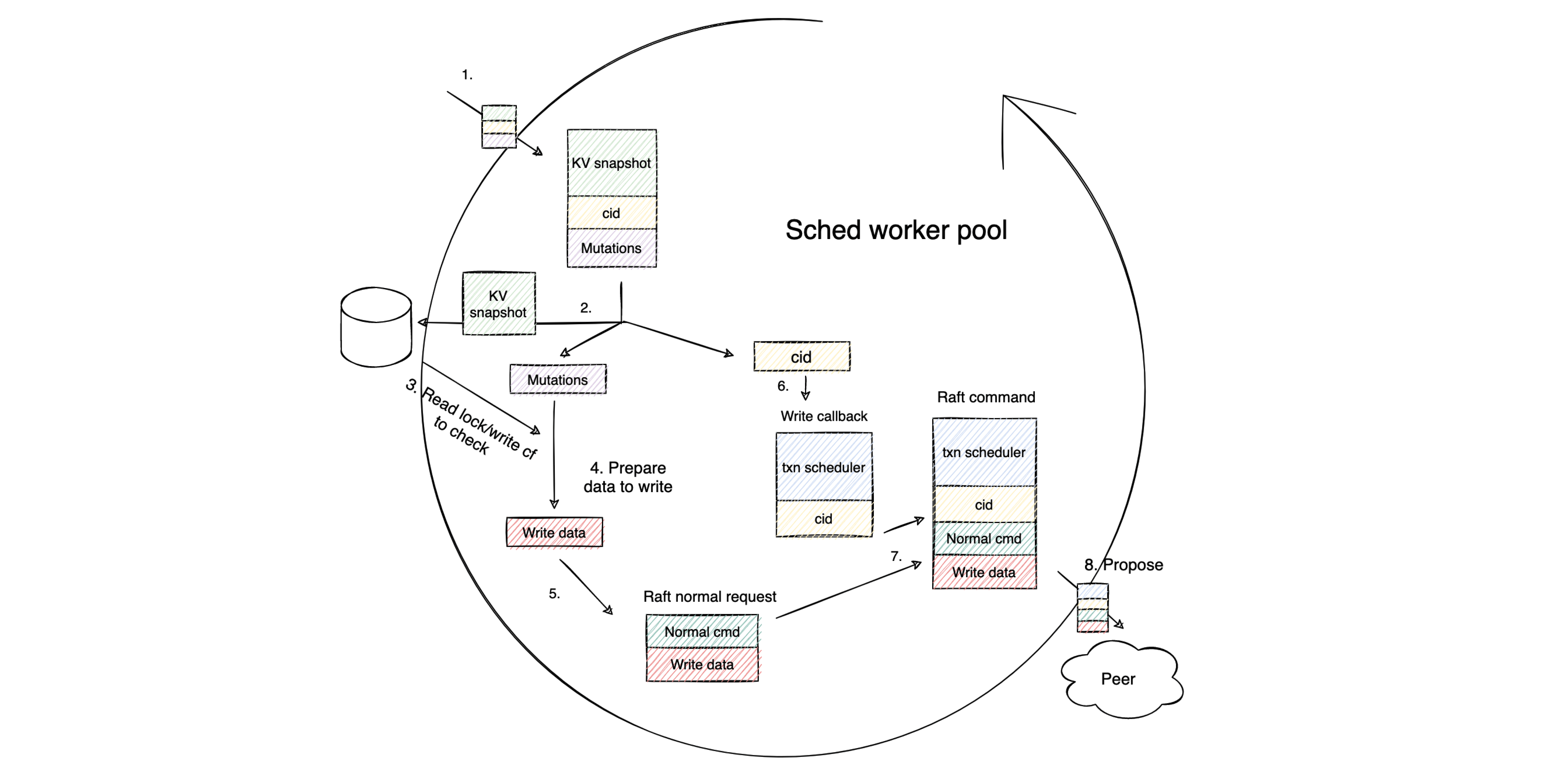

The Write Prepare phase

In this phase, when a transaction worker in the schedule worker pool receives the task sent by the raftstore thread at step 1, the worker starts processing the task at step 2 by splitting the task into the KV snapshot, the Mutations, and the cid.

The main logic of the transaction layer now comes into play. It includes the following steps performed by the transaction worker thread:

- Step 3: Reads the KV engine via the snapshot and checks whether the transaction constraints hold.

- Step 4: Prepares the data to be written for the prewrite request after the check is passed.

- Step 5: Wraps the data into a normal Raft request.

- Step 6: Prepares a new write callback with the `cid`.

- Step 7: Assembles the callback with the normal Raft request into a Raft command.

- Step 8: Proposes the command to the peer it belongs to.
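To give a feel for what "checking the transaction constraints" in step 3 might involve, here is a heavily simplified sketch in the spirit of Percolator: a key must not be locked by another transaction, and it must not have a committed write at or after this transaction's start timestamp. The types `KeyState` and `PrewriteError` and the function `prewrite_check` are hypothetical, and the real checks in TiKV cover many more cases.

```rust
use std::collections::BTreeMap;

// Hypothetical, simplified view of what the snapshot exposes for one key.
#[derive(Default)]
struct KeyState {
    lock_start_ts: Option<u64>,    // start_ts of the transaction holding a lock
    latest_commit_ts: Option<u64>, // commit_ts of the newest committed write
}

#[derive(Debug, PartialEq)]
enum PrewriteError {
    KeyIsLocked { key: String, lock_ts: u64 },
    WriteConflict { key: String, commit_ts: u64 },
}

// Checks every key against the snapshot; on success returns the keys that the
// prewrite would lock and write.
fn prewrite_check(
    snapshot: &BTreeMap<String, KeyState>,
    keys: &[&str],
    start_ts: u64,
) -> Result<Vec<String>, PrewriteError> {
    for &key in keys {
        if let Some(state) = snapshot.get(key) {
            // Another transaction holds the lock on this key.
            if let Some(lock_ts) = state.lock_start_ts {
                if lock_ts != start_ts {
                    return Err(PrewriteError::KeyIsLocked { key: key.to_string(), lock_ts });
                }
            }
            // Someone committed a write at or after our start timestamp.
            if let Some(commit_ts) = state.latest_commit_ts {
                if commit_ts >= start_ts {
                    return Err(PrewriteError::WriteConflict { key: key.to_string(), commit_ts });
                }
            }
        }
    }
    Ok(keys.iter().map(|k| k.to_string()).collect())
}

fn main() {
    let mut snapshot = BTreeMap::new();
    snapshot.insert(
        "k1".to_string(),
        KeyState { latest_commit_ts: Some(5), ..Default::default() },
    );
    println!("{:?}", prewrite_check(&snapshot, &["k1", "k2"], 10));
    println!("{:?}", prewrite_check(&snapshot, &["k1"], 3));
}
```

Only reads happen here; nothing is written until the Raft command built in steps 5 through 8 is applied.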

The transaction layer logic ends here. This Raft command contains write operations. If the command runs successfully, the prewrite request is successful.

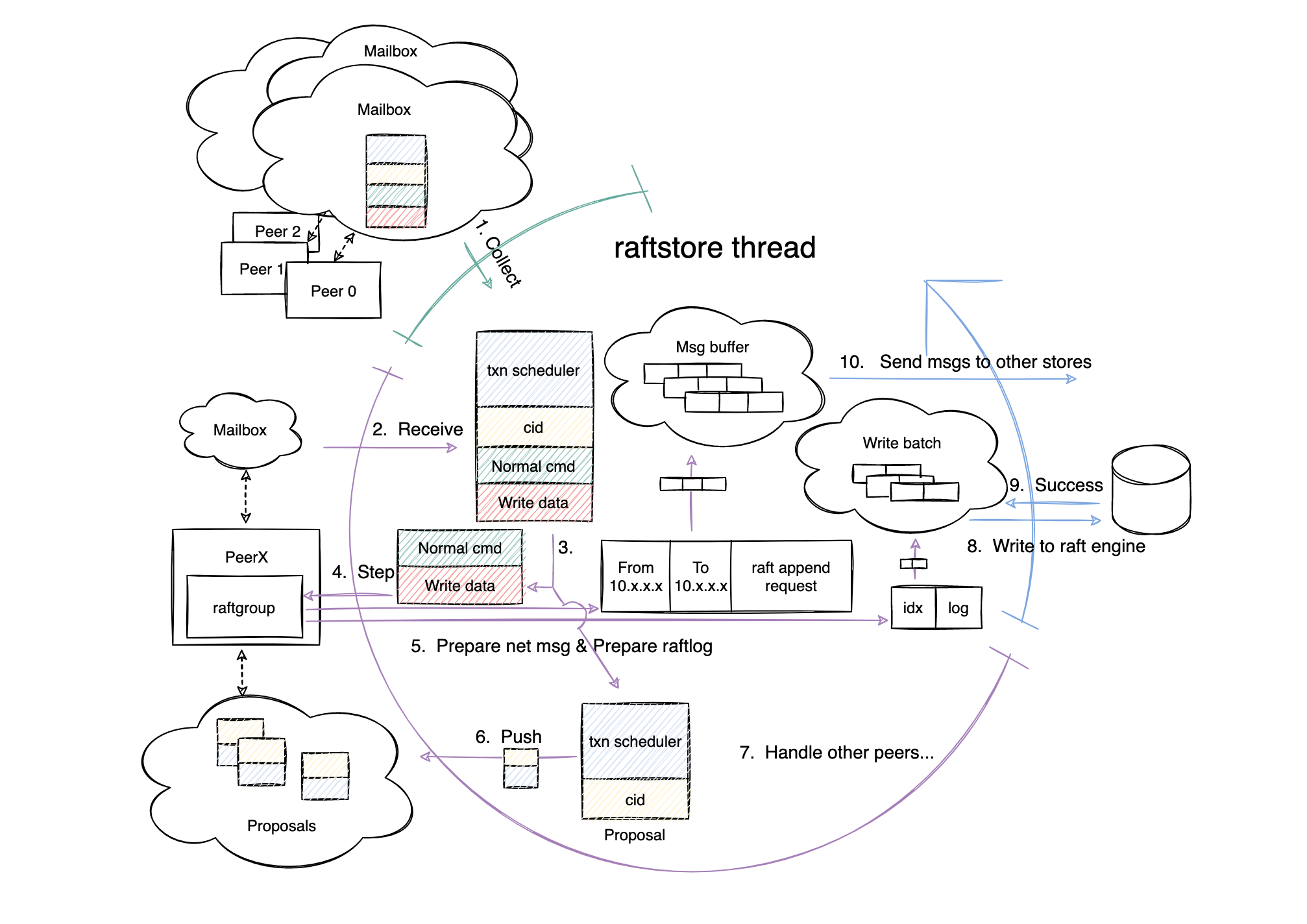

The Write Propose phase

Now it’s time for the raftstore thread to propose the write operations. The following figure shows how the raftstore thread processes the Raft command in this phase.

The first three steps in this phase are the same as those in the previous sections. I won’t repeat them here. Let’s go through the remaining steps:

- Step 4: raft-rs saves the network message to the message buffer.

- Step 5: raft-rs appends the Raft log to the write batch.

- Step 6: The peer converts the write callback into a proposal and stores it in the peer’s internal proposal queue.

- Step 7: The raftstore thread goes back to process other peers with messages in their mailboxes.

- Step 8: The raftstore thread comes to the process I/O phase (blue part of the circle) and writes the Raft log entries temporarily stored in the write batch to the Raft engine.

- Step 9: The Raft engine reports that the entries were written successfully.

- Step 10: The raftstore thread sends the network messages to other storage nodes.
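Steps 4 through 10 can be modeled in a few lines. In this sketch, which rests on my own simplifications, `Entry`, `Proposal`, `Peer`, `IoContext`, `propose`, and `process_io` are hypothetical names, the Raft engine is a plain vector, and the outgoing append messages are represented by copies of the entries they would carry.

```rust
// Hypothetical types for illustration only.
#[derive(Debug, Clone, PartialEq)]
struct Entry {
    index: u64,
    term: u64,
    data: Vec<u8>,
}

#[derive(Debug, PartialEq)]
struct Proposal {
    index: u64,
    term: u64,
    cid: u64, // identifies the write callback to invoke later
}

#[derive(Default)]
struct Peer {
    term: u64,
    last_index: u64,
    proposals: Vec<Proposal>,
}

#[derive(Default)]
struct IoContext {
    write_batch: Vec<Entry>,    // Raft log entries waiting to be persisted
    message_buffer: Vec<Entry>, // append messages waiting to be sent
}

// Handle messages phase (steps 4-6): buffer the message, buffer the log
// entry, and remember the proposal. Returns the proposed log index.
fn propose(peer: &mut Peer, ctx: &mut IoContext, cid: u64, data: Vec<u8>) -> u64 {
    peer.last_index += 1;
    let entry = Entry { index: peer.last_index, term: peer.term, data };
    ctx.message_buffer.push(entry.clone());
    ctx.write_batch.push(entry);
    peer.proposals.push(Proposal { index: peer.last_index, term: peer.term, cid });
    peer.last_index
}

// Process I/O phase (steps 8-10): persist the write batch first, then hand
// back the messages to send to the other nodes.
fn process_io(ctx: &mut IoContext, raft_engine: &mut Vec<Entry>) -> Vec<Entry> {
    raft_engine.append(&mut ctx.write_batch);
    std::mem::take(&mut ctx.message_buffer)
}

fn main() {
    let mut peer = Peer { term: 2, last_index: 4, ..Default::default() };
    let mut ctx = IoContext::default();
    let mut raft_engine = Vec::new();
    let index = propose(&mut peer, &mut ctx, 42, b"prewrite k1".to_vec());
    let sent = process_io(&mut ctx, &mut raft_engine);
    println!("proposed at index {}, persisted {}, sent {}", index, raft_engine.len(), sent.len());
}
```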

The Write Propose phase is over. Now, as with the end of the Read Propose phase, the leader node must wait for responses from other TiKV nodes before it moves on to the next phase.

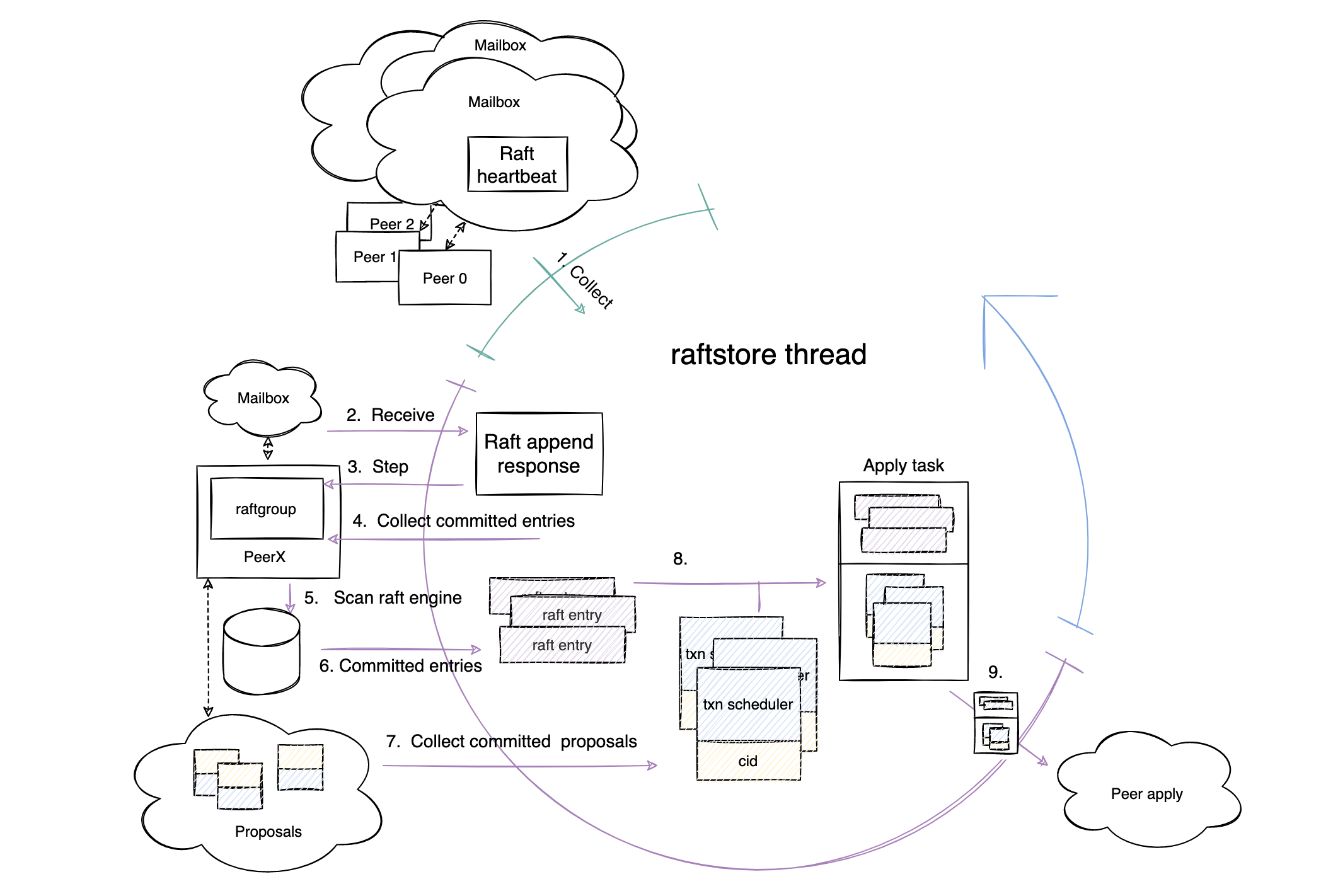

The Write Commit phase

After another “long” wait, the follower nodes respond to the leader node and bring the prewrite request to the Write Commit phase.

- Step 2: The peer receives Raft append responses from other TiKV nodes.

- Step 3: The step function processes the response messages.

- Steps 4, 5, and 6: The raftstore thread collects committed Raft entries from the Raft engine.

- Step 7: The raftstore thread collects the proposals associated with the committed Raft entries from the internal proposal queue.

- Step 8: The raftstore thread assembles the committed Raft entries and their associated proposals into an apply task.

- Step 9: The raftstore thread sends the task to the apply thread.
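Steps 7 and 8 amount to pairing each committed entry with the proposal that created it, if there is one. The sketch below assumes that a proposal is matched by its log index and term, and that proposals sit in the queue in proposal order; `Entry`, `Proposal`, `ApplyItem`, and `build_apply_task` are names invented for this example.

```rust
use std::collections::VecDeque;

// Hypothetical types for illustration only.
#[derive(Debug, Clone, PartialEq)]
struct Entry {
    index: u64,
    term: u64,
}

struct Proposal {
    index: u64,
    term: u64,
    cid: u64,
}

// One committed entry plus the cid of its write callback, if this node
// proposed it.
#[derive(Debug, PartialEq)]
struct ApplyItem {
    entry: Entry,
    cid: Option<u64>,
}

// Pairs committed entries with proposals from the front of the queue.
fn build_apply_task(committed: Vec<Entry>, proposals: &mut VecDeque<Proposal>) -> Vec<ApplyItem> {
    committed
        .into_iter()
        .map(|entry| {
            // Drop proposals that can no longer match this or any later entry.
            // (A real system would notify their callers instead of dropping.)
            while proposals
                .front()
                .map_or(false, |p| (p.term, p.index) < (entry.term, entry.index))
            {
                proposals.pop_front();
            }
            let matches = proposals
                .front()
                .map_or(false, |p| p.term == entry.term && p.index == entry.index);
            let cid = if matches {
                proposals.pop_front().map(|p| p.cid)
            } else {
                None
            };
            ApplyItem { entry, cid }
        })
        .collect()
}

fn main() {
    let mut proposals = VecDeque::new();
    proposals.push_back(Proposal { index: 5, term: 2, cid: 42 });
    let committed = vec![Entry { index: 5, term: 2 }, Entry { index: 6, term: 2 }];
    for item in build_apply_task(committed, &mut proposals) {
        println!("{:?}", item);
    }
}
```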

With the Write Commit phase coming to an end, the raftstore thread completes all its tasks. Next, the baton is handed over to the apply thread.

The Write Apply phase

This is the most critical phase for a prewrite request, in which the apply thread actually writes to the KV engine.

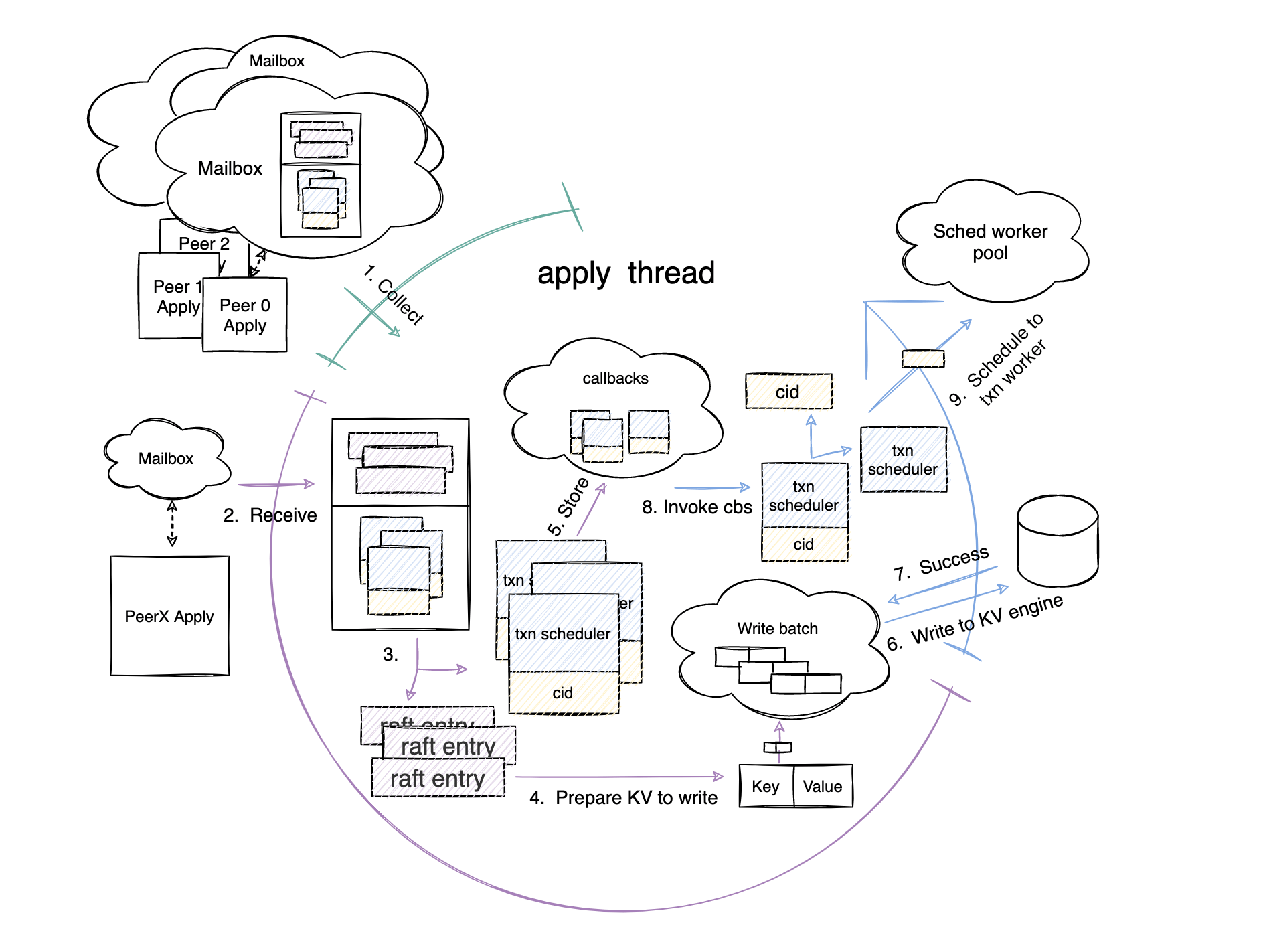

After the apply thread receives the apply task sent by the raftstore thread at steps 1 and 2, it continues with the following steps in the handle messages phase (the purple part of the circle):

- Step 3: Reads the committed Raft entries from the tasks.

- Step 4: Converts the entries into key-value pairs and stores the key-value pairs in the write batch.

- Step 5: Reads the proposals from the tasks and stores them as callbacks.

Then, in the next phase (process I/O), the apply thread takes the following steps:

- Step 6: Writes the key-value pairs in the write batch to the KV engine.

- Step 7: Receives the result from the KV engine indicating whether the write succeeded.

- Step 8: Invokes all callbacks.

When a callback is invoked, the transaction scheduler sends the task with the cid to the transaction worker at step 9, bringing us to the final part of the story.
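The ordering in this phase (batch first, write second, callbacks last) can be sketched as follows. This is a toy model under my own assumptions: `ApplyTask`, `WriteCallback`, and `apply` are hypothetical names, entries are already decoded into key-value pairs, and an in-memory map plays the KV engine and never fails.

```rust
use std::cell::RefCell;
use std::collections::BTreeMap;
use std::rc::Rc;

// The callback receives whether the write succeeded.
type WriteCallback = Box<dyn FnOnce(bool)>;

// Hypothetical apply task: decoded key-value pairs plus an optional callback.
struct ApplyTask {
    entries: Vec<(Vec<u8>, Vec<u8>)>,
    callback: Option<WriteCallback>,
}

// Returns the number of key-value pairs written to the engine.
fn apply(tasks: Vec<ApplyTask>, kv_engine: &mut BTreeMap<Vec<u8>, Vec<u8>>) -> usize {
    // Handle messages phase: fill the write batch and collect the callbacks.
    let mut write_batch = Vec::new();
    let mut callbacks = Vec::new();
    for task in tasks {
        write_batch.extend(task.entries);
        callbacks.extend(task.callback);
    }

    // Process I/O phase: write the whole batch, then invoke every callback.
    let written = write_batch.len();
    for (key, value) in write_batch {
        kv_engine.insert(key, value);
    }
    let ok = true; // the in-memory map cannot fail; a real engine could
    for callback in callbacks {
        callback(ok);
    }
    written
}

fn main() {
    let done: Rc<RefCell<Vec<(u64, bool)>>> = Rc::new(RefCell::new(Vec::new()));
    let recorder = Rc::clone(&done);
    let callback: WriteCallback = Box::new(move |ok: bool| recorder.borrow_mut().push((42, ok)));
    let task = ApplyTask {
        entries: vec![(b"k1".to_vec(), b"v1".to_vec())],
        callback: Some(callback),
    };
    let mut kv_engine = BTreeMap::new();
    let written = apply(vec![task], &mut kv_engine);
    println!("wrote {} pair(s); callbacks: {:?}", written, done.borrow());
}
```

Invoking callbacks only after the batch is written means a caller is never told "success" for data that is not yet in the engine.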

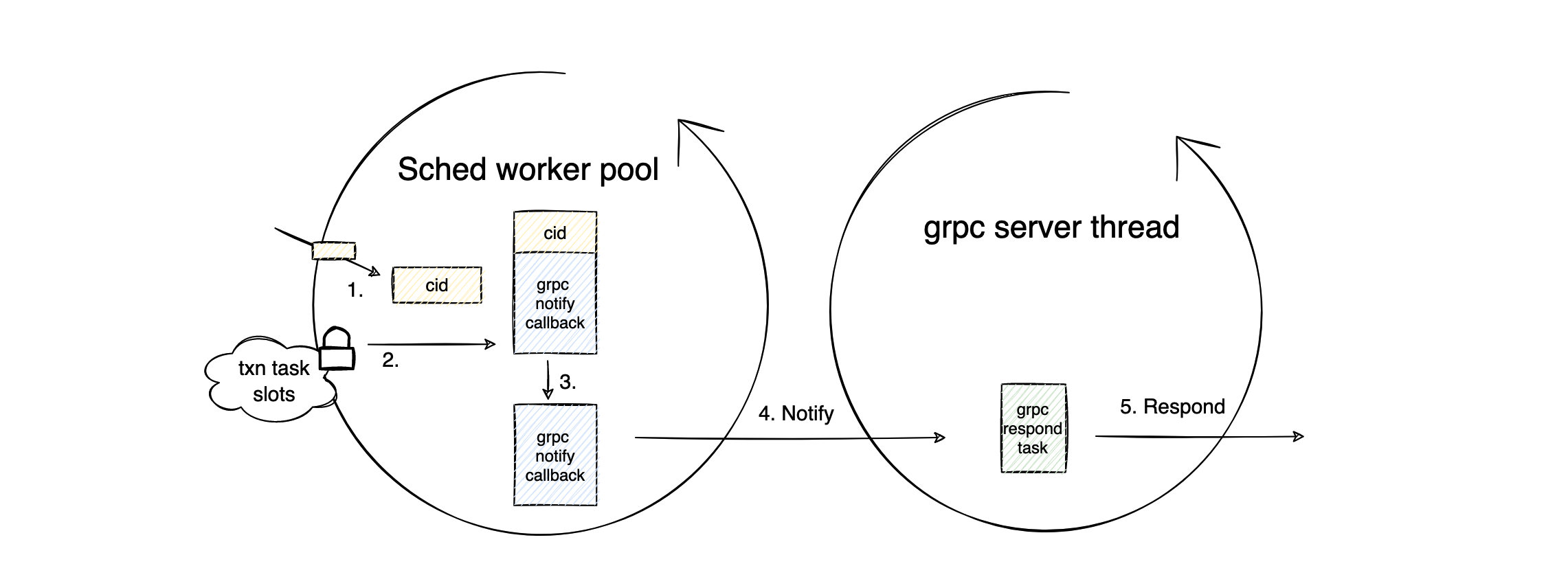

The Return phase

This is the final phase of the prewrite process. TiKV returns the execution result of the prewrite request to the client.

The workflow in this phase is mainly performed by the transaction worker:

- Step 1: The transaction worker gets the `cid` sent by the transaction scheduler.

- Step 2: The transaction worker accesses the transaction task slots with mutual exclusion using the `cid`.

- Step 3: The transaction worker fetches the gRPC notify callback, which was stored in the transaction task slots during the gRPC Request phase.

- Step 4: The transaction worker sends a notification to the gRPC respond task waiting in the gRPC task queue.

- Step 5: The gRPC server thread responds to the client with the success result.
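The steps above can be sketched with a mutex-protected map from `cid` to callback. Here `TaskSlots`, `store`, `take`, and `finish` are hypothetical names chosen for this example, and a standard-library channel again stands in for the gRPC respond task.

```rust
use std::collections::HashMap;
use std::sync::{mpsc, Arc, Mutex};
use std::thread;

type NotifyCallback = Box<dyn FnOnce(Result<(), String>) + Send>;

// Hypothetical task slots: callbacks indexed by cid, guarded by a mutex.
#[derive(Default)]
struct TaskSlots {
    inner: Mutex<HashMap<u64, NotifyCallback>>,
}

impl TaskSlots {
    // Called in the gRPC Request phase.
    fn store(&self, cid: u64, callback: NotifyCallback) {
        self.inner.lock().unwrap().insert(cid, callback);
    }

    // Steps 2-3: look up and remove the callback under the lock.
    fn take(&self, cid: u64) -> Option<NotifyCallback> {
        self.inner.lock().unwrap().remove(&cid)
    }
}

// Step 4: notify the waiting respond task. Returns false for an unknown cid.
fn finish(slots: &TaskSlots, cid: u64, result: Result<(), String>) -> bool {
    match slots.take(cid) {
        Some(callback) => {
            callback(result);
            true
        }
        None => false,
    }
}

fn main() {
    let slots = Arc::new(TaskSlots::default());
    let (tx, rx) = mpsc::channel::<Result<(), String>>();
    let cid = 42;
    slots.store(cid, Box::new(move |res| {
        let _ = tx.send(res);
    }));

    // The transaction worker runs on its own thread.
    let worker_slots = Arc::clone(&slots);
    let worker = thread::spawn(move || finish(&worker_slots, cid, Ok(())));

    // Step 5: the respond task wakes up and answers the client.
    println!("client sees: {:?}", rx.recv().unwrap());
    assert!(worker.join().unwrap());
}
```

Removing the callback from the map while holding the lock ensures that a given `cid` can be answered only once, even if two workers race on it.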

Conclusion

This article introduces the eight phases of a successful prewrite request and focuses on the workflow within each phase. I hope this post can help you clarify the resource usage of TiKV requests and give you a deeper understanding of TiKV.

For more TiKV implementation details, see the TiKV documentation and deep dive. If you have any questions or ideas, feel free to join the TiKV Transaction SIG and share them with us!
